A Non-Coder's Real Guide to Building Things with AI

Something That Stopped Me in My Tracks

A while back, a Greek developer named Stavros published an article called “How I write software with LLMs.”

One line stuck with me. I read it three times:

“I thought I liked programming. Turns out, I liked making things. Programming was just the means.”

That hit hard.

Because I’m exactly that person: someone who can’t write code but desperately wants to build things. Over the past year, I used AI to build an entire automated video production pipeline, from topic research to video generation to publishing. Every script, every API call, every piece of data processing was written by AI.

I never typed a single line of code by hand. But I understand every module’s logic better than anyone.

Today I want to break down everything I’ve learned — my own hard-won lessons plus real-world tactics from two top developers (Addy Osmani, a Google Chrome engineer, and Stavros mentioned above) — into a practical methodology that regular people can actually use.

No hype. No magic. Just: what works, what doesn’t, and how to maximize your odds.

Can AI Actually Write Decent Code?

Let’s start with data.

GitHub’s research shows that developers using Copilot complete tasks 55% faster. 84% of developers are already using or planning to use AI coding tools. Daily AI users ship 60% more pull requests than light users.

Sounds great, right? Hold on.

The same data also shows: 45% of developers say debugging AI-generated code sometimes takes longer than writing it from scratch.

That’s the real state of AI coding — it’s not magic, but for people who know how to use it, it’s genuinely transformative.

The key phrase: “know how to use it.”

Stavros nailed it in his article:

“The variance of results from person to person when using LLMs is enormous.”

Why do some people double their productivity while others think it’s garbage? It’s not the tool — it’s the method.

How the Pros Actually Use AI to Code

Rule #1: Write the Spec Before Writing Code

Google Chrome engineer Addy Osmani is blunt about this:

Don’t just tell AI: “Build me a thing.”

(Okay, we’ve all done it when we first started…)

His first step is always: write a detailed spec document with AI (he calls it spec.md) — what you’re building, data structures, edge cases, testing strategy.

Most people skip this step. You think you’re saving time. You’re actually digging yourself a hole.

If you tell AI “build me a budgeting app,” AI will definitely give you a bunch of code. But that code will probably be a mess: features scattered everywhere, tangled logic, and every fix breaking three other things.

The right approach:

Spend 15 minutes writing down what you want in plain language. The more specific, the better:

- Who’s this for?

- What are the core features? (Track expenses → categorize → monthly reports)

- Where’s the data stored? (Local? Cloud?)

- Does it need login?

- What does the minimum viable version look like?

Addy Osmani calls this “15 minutes of waterfall development” — quick but thorough planning that buys you hours of smooth execution.

In everyday terms: You don’t walk into a restaurant and say “give me some food.” You say “a bowl of Lanzhou beef noodles, thin noodles, spicy, add an egg.” Talking to AI is the same idea.
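If you want to see how the questions above could turn into an actual spec.md, here is a minimal sketch. The helper names (`SPEC_QUESTIONS`, `missing_answers`, `render_spec`) and the sample answers are my own illustration, not something from Addy Osmani's workflow. The point is only that a blank answer is easy to spot before you ever ask AI for code.

```python
# Hypothetical helper: the keys are made up, the questions mirror the list above.
SPEC_QUESTIONS = {
    "audience": "Who's this for?",
    "core_features": "What are the core features?",
    "storage": "Where's the data stored?",
    "login": "Does it need login?",
    "mvp": "What does the minimum viable version look like?",
}


def missing_answers(answers: dict) -> list:
    """Return the questions that are still blank or absent."""
    return [
        question
        for key, question in SPEC_QUESTIONS.items()
        if not answers.get(key, "").strip()
    ]


def render_spec(title: str, answers: dict) -> str:
    """Build the text of a spec.md file, marking unanswered questions as TODO."""
    lines = [f"# {title}", ""]
    for key, question in SPEC_QUESTIONS.items():
        lines.append(f"## {question}")
        lines.append(answers.get(key, "").strip() or "TODO")
        lines.append("")
    return "\n".join(lines)


# Sample answers for the budgeting app example (illustrative only).
answers = {
    "audience": "Me and my partner",
    "core_features": "Track expenses, categorize them, monthly reports",
    "storage": "Local file",
}

unanswered = missing_answers(answers)
spec_text = render_spec("Budgeting app", answers)
print("Still to decide:", unanswered)
print(spec_text)
```

Anything that shows up as TODO is a decision AI would otherwise make for you, silently.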

Rule #2: One Thing at a Time

This is something Addy Osmani hammers repeatedly:

“LLMs do best when given focused prompts: implement one function, fix one bug, add one feature at a time.”

You can’t dump an entire app on AI and say “finish it.” What happens? Someone described it perfectly:

“Like 10 developers coding simultaneously but never speaking to each other.”

Pure chaos.

The right approach: break the big project into small steps. One at a time.

Step 1: Set up basic framework → test → pass

Step 2: Implement core feature A → test → pass

Step 3: Implement core feature B → test → pass

…

Confirm each step works before moving to the next. Like building with blocks — one piece at a time.

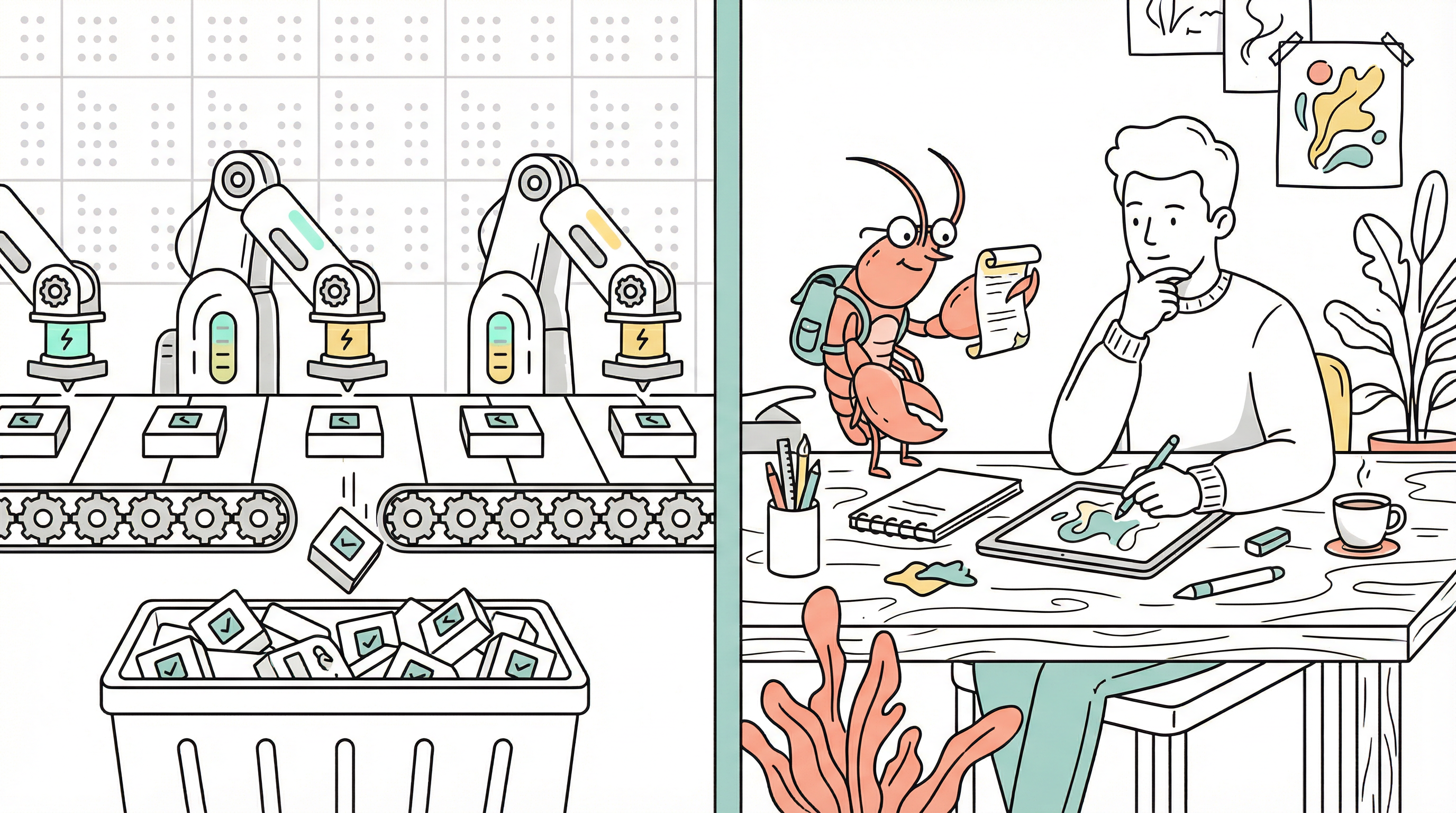
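The "test, pass, then move on" rhythm can be sketched in a few lines. This is a toy illustration under my own assumptions: `run_plan` is a made-up name, and each check is a stand-in lambda rather than a real test. What matters is the shape: the loop refuses to start the next step until the current one passes.

```python
from typing import Callable, List, Tuple

# Each step pairs a description with a check that returns True when the step works.
Step = Tuple[str, Callable[[], bool]]


def run_plan(steps: List[Step]) -> List[str]:
    """Run steps in order and stop at the first one whose check fails."""
    completed = []
    for name, check in steps:
        if not check():
            print(f"STOP: '{name}' failed its test. Fix it before moving on.")
            break
        print(f"PASS: {name}")
        completed.append(name)
    return completed


# Stand-in checks; in a real project each one would run that step's tests.
plan = [
    ("Set up basic framework", lambda: True),
    ("Implement core feature A", lambda: 2 + 2 == 4),
    ("Implement core feature B", lambda: False),  # pretend this one is broken
    ("Implement core feature C", lambda: True),
]

done = run_plan(plan)
```

Feature C never runs here, which is exactly the idea: nothing gets built on top of a broken step.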

Rule #3: Have Different AIs Check Each Other’s Work

This is Stavros’s killer move — and the single most valuable tactic in my opinion.

His workflow looks like this:

- Architect (Claude Opus): Only designs the plan. No code. Maps out every file, every function.

- Developer (Claude Sonnet): Takes the plan and writes code. Fast and efficient.

- Reviewers (OpenAI Codex + Google Gemini + Claude Opus): Three AIs from different companies each review the code independently, hunting for bugs and logic flaws.

Why use AIs from different companies to review?

Because the same model reviewing its own code is like a student grading their own exam — you can’t see your own mistakes. Stavros calls this “self-agreement bias.”

Cross-reviewing with different models is like having three strangers peer-review your work. The odds of catching issues go way up.

In everyday terms: You proofread your own essay three times and think it’s perfect. You hand it to a friend, and they find typos everywhere. AI is the same — it can’t catch its own blind spots. You need “outsiders” to check.
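Here is a rough sketch of why independent reviewers help. To keep it runnable without any accounts or API keys, the three "reviewers" are simple rule-based stand-ins I made up, not real calls to Codex, Gemini, or Claude. Each one has a different blind spot, and `cross_review` (also a hypothetical name) just records who flagged what.

```python
from typing import Callable, Dict, List

# A reviewer takes source code and returns a list of short issue descriptions.
Reviewer = Callable[[str], List[str]]


def cross_review(code: str, reviewers: Dict[str, Reviewer]) -> Dict[str, List[str]]:
    """Map each reported issue to the names of the reviewers that flagged it."""
    flagged_by: Dict[str, List[str]] = {}
    for name, review in reviewers.items():
        # dict.fromkeys drops duplicate issues from one reviewer, keeping order.
        for issue in dict.fromkeys(review(code)):
            flagged_by.setdefault(issue, []).append(name)
    return flagged_by


# Stand-in reviewers (assumptions for illustration), each with its own blind spots.
def reviewer_a(code: str) -> List[str]:
    return ["uses eval on user input"] if "eval(" in code else []


def reviewer_b(code: str) -> List[str]:
    issues = []
    if "eval(" in code:
        issues.append("uses eval on user input")
    if "except:" in code:
        issues.append("bare except hides errors")
    return issues


def reviewer_c(code: str) -> List[str]:
    return ["hardcoded password"] if 'password = "' in code else []


sample = '''
password = "hunter2"
try:
    result = eval(user_text)
except:
    pass
'''

reviewers = {"reviewer_a": reviewer_a, "reviewer_b": reviewer_b, "reviewer_c": reviewer_c}
findings = cross_review(sample, reviewers)

# Show the issues most reviewers agree on first.
for issue, names in sorted(findings.items(), key=lambda item: -len(item[1])):
    print(f"{issue}: flagged by {', '.join(names)}")
```

No single reviewer catches all three problems in the sample, but together they do. With real models you would paste the same code into each one and compare the answers the same way.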

Real Results from Real Non-Coders

Don’t think this is just a programmer thing. In 2025–2026, a ton of non-coders built real products with AI:

Ye Jianfeng — a liberal arts college student, zero coding experience. Used AI to build an emotional assessment mini-program. After launch, it hit over 1 million reads and earned him 12,000 RMB in two weeks.

Blake — a college grad who couldn’t code at all. Used ChatGPT to write Swift, built three apps in two years (an AI dating assistant, a looks-rating app, a calorie calculator), and broke $10 million in annual revenue.

Blogger GuoGuo from Chengdu — 31 years old, no coding background. “Dictated” requirements to AI in conversation and built a self-discipline habit-tracking app. In less than two months, 850 sales, nearly 9,000 RMB in revenue.

They all share one thing: They didn’t know code, but they knew exactly what they wanted to build.

Clear requirements + AI execution = a real product.

And the failures? Most of them died in one place: not knowing what they wanted, and expecting AI to figure it out for them.

AI is a tool, not a product manager.

What AI Can and Can’t Do

Save this list. You’ll probably need it later:

✅ AI is great at:

- Frontend pages (HTML/CSS/JavaScript)

- Backend APIs

- Data processing scripts

- Small tools and mini-programs

- Automation workflows

- Repetitive CRUD code

- Writing test cases

⚠️ AI can manage, but you need to supervise:

- Complex business logic (subtle logic errors are common)

- Database design (functional, but rarely optimal)

- Cross-system integration (edge cases get missed)

❌ AI still can’t do well:

- Complex system architecture (this is where human engineers are irreplaceable)

- High-security systems (payments, healthcare, finance — AI code has a 70% security vulnerability rate)

- Large-scale legacy code maintenance (lacks business context understanding)

- Performance micro-optimization (AI code “runs but isn’t fast”)

Stavros put it perfectly:

“In technical areas I know (like backend), AI’s code quality is higher than what I’d write by hand. But in areas I don’t know (like mobile), it quickly turns into a mess.”

Translation: In domains you understand, AI is an amplifier. In domains you don’t, AI is a magnifying glass — magnifying your ignorance.

A Five-Step Method Anyone Can Use Right Now

If you’re a non-coder and want to build a small tool or product with AI, follow this workflow for the best success rate:

Step 1: Know What You Want (20 minutes)

Open a document and write in plain language:

- Who’s this for?

- What are the core features? (3 max)

- What does the simplest version look like?

- What’s a reference product? (screenshots or links)

Step 2: Build a Spec with AI (30 minutes)

Send your Step 1 notes to AI (Claude or ChatGPT both work) and have it fill in the gaps:

- “Help me organize this idea into a detailed requirements doc”

- “What edge cases haven’t I thought of?”

- “What should the MVP include?”

Step 3: Have AI Create a Dev Plan (10 minutes)

Take the spec and have AI break development into 5–10 small steps. Each step should be independently testable.

Step 4: Execute Step by Step (the core work)

Follow the plan one step at a time. After each step, test it, confirm it works, then move on. Hit a bug? Paste the error message straight into AI and let it fix it.
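One thing that helps when pasting errors: give AI the whole error, not just the last line. A minimal sketch, assuming your project is in Python; `build_bug_report` and `parse_amount` are hypothetical names I made up for the example. It uses the standard library's `traceback.format_exc()` to capture the full error text along with the step and input that triggered it.

```python
import traceback
from typing import Callable, Optional


def build_bug_report(step: str, func: Callable, *args) -> Optional[str]:
    """Run func; if it raises, return a paste-ready report, otherwise None."""
    try:
        func(*args)
    except Exception:
        return (
            f"Step: {step}\n"
            f"Input: {args!r}\n"
            "Full error:\n"
            f"{traceback.format_exc()}"
        )
    return None


# Hypothetical function from a budgeting app: turns typed text into a number.
def parse_amount(text: str) -> float:
    return float(text)


report = build_bug_report("Step 2: track expenses", parse_amount, "12,50")
if report:
    print(report)  # paste this whole block into the AI chat
```

The report tells AI which step broke, what input broke it, and the full error trail, which is usually enough context to avoid the "same bug going back and forth" loop.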

Step 5: Cross-Review with a Different AI (20 minutes)

After all features are done, paste the code into a different AI (e.g., if you used Claude to write it, have ChatGPT review it) and ask it to find bugs and security issues.

This method won’t guarantee you build the next TikTok. But building a working small tool, mini-program, personal website, or automation script? Totally doable.

My Honest Take on “Vibe Coding”

In early 2025, OpenAI founding member Andrej Karpathy coined the term “Vibe Coding” — the idea that you don’t need to read code, just describe what you want, look at the result, and if it feels right, ship it.

The concept went viral.

But honestly, it also misled a lot of people.

Many people understood Vibe Coding as: never look at the code, just trust whatever AI gives you.

The result? A bunch of people pushed code full of security vulnerabilities to production. By 2026, AI-generated code was already responsible for one in five security breach incidents.

My first AI coding tool was Cursor, and wow — a whole new world: What? I just describe what I want and watch code appear line by line? Amazing! But before long, I fell into the loop of “the same bug going back and forth” and “AI keeps saying it found the problem but nothing actually gets fixed.” I’m embarrassed to admit I pulled a few all-nighters, addicted to the process but not actually shipping anything. I tried Windsurf along the way, then eventually landed on Claude Code and slowly learned how to communicate with the model. I stopped saying “build me a CMS” and started breaking projects into small pieces, giving it enough context, taking baby steps.

One more tip: I’m a content creator — mainly video. So my needs are actually very predictable: topic research, scripts, prompts, asset generation, music generation, editing. I decided to build each task as a standalone script first, get it running reliably, and then stitch everything together into a pipeline. Much more stable than trying to build the whole thing at once.
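To make the "standalone scripts first, pipeline later" idea concrete, here is a small sketch. The stage names and their fake outputs are placeholders I invented, not my real scripts. Each stage is a plain function that takes the current state and returns a new one, so it can be run and tested on its own before anything gets chained.

```python
from typing import Callable, Dict, List

# A stage takes the state so far and returns a new state with its result added.
Stage = Callable[[Dict], Dict]


# Placeholder stages: the real ones would call AI tools or other services.
def research_topic(state: Dict) -> Dict:
    return {**state, "topic": f"Ideas about {state['niche']}"}


def write_script(state: Dict) -> Dict:
    return {**state, "script": f"Script for: {state['topic']}"}


def make_prompts(state: Dict) -> Dict:
    return {**state, "prompts": [f"Opening scene for: {state['script']}"]}


def run_pipeline(stages: List[Stage], state: Dict) -> Dict:
    """Feed the output of each stage into the next one, in order."""
    for stage in stages:
        state = stage(state)
    return state


# Each stage works on its own...
solo = write_script({"topic": "Budget travel"})

# ...and the pipeline is just the same functions in a list.
result = run_pipeline([research_topic, write_script, make_prompts], {"niche": "cooking"})
print(result["prompts"])
```

When something breaks, you only have to look at one stage instead of the whole pipeline.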

Vibe Coding doesn’t mean ignoring the code. The correct interpretation is: You don’t need to write every line by hand, but you must understand what the code is doing.

Like cars — you don’t need to know how to build one, but you need to know how to drive, and when to hit the brakes.

Stavros says he’s “never read most of his own project code,” but he’s “intimately familiar with the architecture and inner workings of every project.” That’s the critical difference — not reading code ≠ not understanding what the code does.

Final Thoughts

AI coding in 2026 is neither the utopia of “everyone’s a programmer now” nor the apocalypse of “all programmers are obsolete.”

It’s more like a tool revolution — the way Excel gave everyone the ability to do data analysis, AI coding gives everyone the ability to turn ideas into working products.

But tools are just tools. People who know what they want will build amazing things with AI. People who don’t will just get beautiful garbage.

Know what you want. Communicate it clearly. Take it step by step. Get someone else to check your work.

Four short rules. Good for a lifetime.

Know what you want, say it clearly, build step by step, get it checked — that’s the entire secret of AI coding in 2026.

Thanks for reading.